Bookish Diversions: Use AI, Lose Your Book Deal—and Maybe More

‘Shy Girl’ Canceled; Garbage Prose; AI Everywhere! or Is It? Training Data; More

¶ Shy Girl retreats. Horror author Mia Ballard is facing horrors of her own after publisher Hachette decided to pull her novel Shy Girl from publication. Originally released last fall in the UK, where the book sold about 1,800 copies, Shy Girl was slated to hit U.S. shelves this spring. But after allegations began buzzing that Ballard used AI to write the book, Hachette bailed in both countries.1

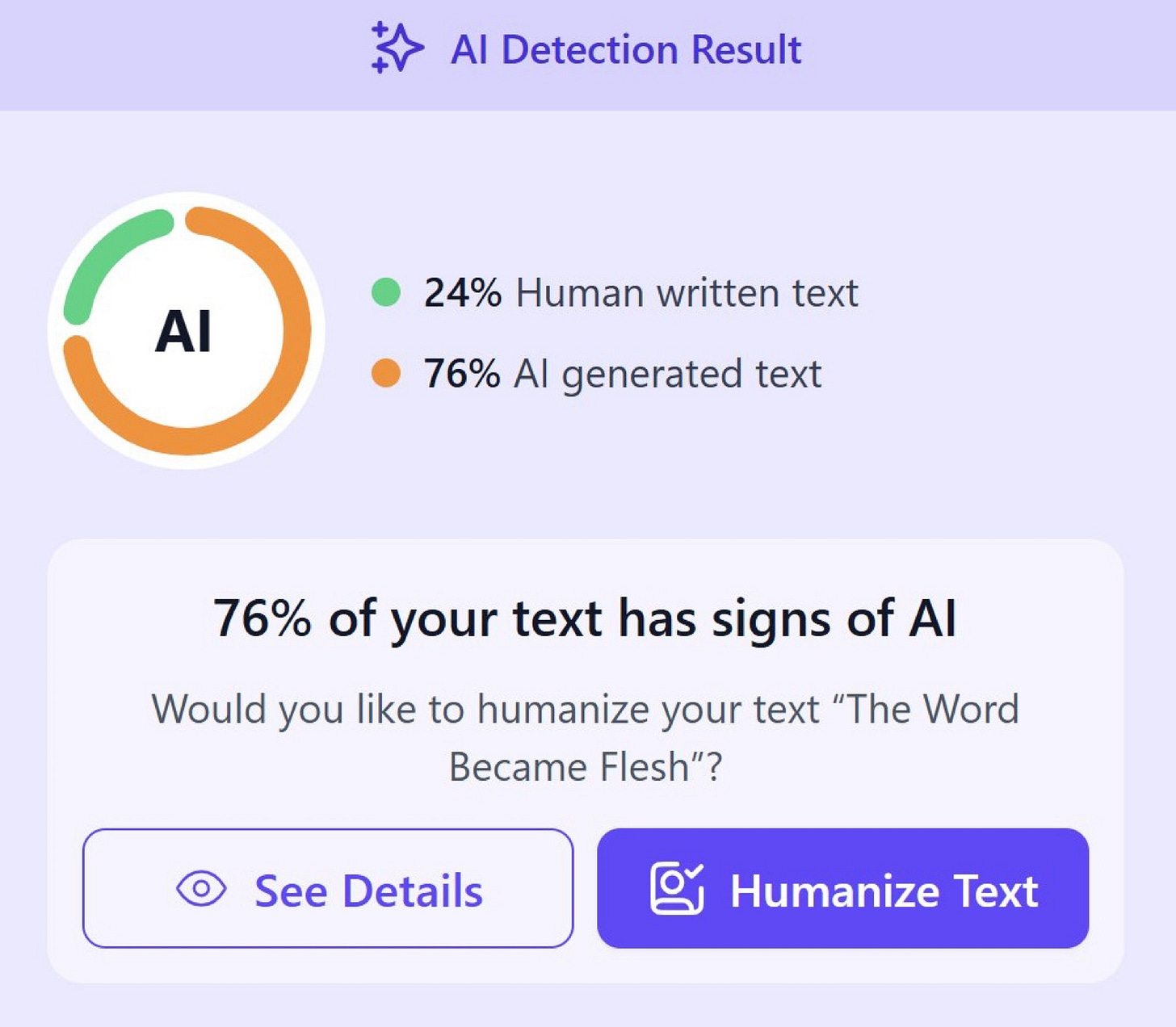

Evidence for the rumors? AI “tells” in the book—artifacts signaling text generated by a large language model, such as ChatGPT or Claude. (Maybe like that em dash just now.) “Readers began flagging what they surmised was A.I. slop,” according to the New York Times,

slamming the book for its generic and confusing metaphors and repetitive phrasing.

“Really bad,” one reader wrote in a one star review. “Pretty sure this was A.I. generated.”

Ballard denies the claim but admits, as the Times reported, AI was used when an acquaintance edited an early, self-published version of the book. Yeah, no. Speaking as an editor, that doesn’t pass the sniff test.

In a traditional publishing arrangement, the publisher reserves the right to edit the book as it sees fit. The author gets plenty of input but rarely the final say on the edits; the publisher has to protect its investment, after all. But that wouldn’t hold for a self-published book.

We don’t know how the editorial process worked in this case, but if the editor’s pass left enough AI residue to deep-six the project, that would indicate (a) the editor overplayed their hand in altering the work and (b) the author didn’t object or correct their meddling. At best, that suggests this editor ought to field IT help-desk calls instead of abusing manuscripts and that Ballard has no sense of her own style. She didn’t object to the editor ruining her book?

¶ Garbage prose. As I was digesting the Ballard story, another landed on my plate. On X, Becky Tuch flagged a New York Times piece from last year with suspicious formulations. The article involves a child-custody battle and its aftermath on a fraught mother-son relationship. Here’s the bit Tuch quoted:

Not hate. Not anger. Just the flat finality of a heart too tired to keep trying.

That’s when I stopped fighting.

I didn’t give up. I shifted.

I stopped thinking love was something I had to prove with court documents and supervised visits and legal bills. I stopped chasing every possible way to make him see I had changed. I started focusing on actually changing.

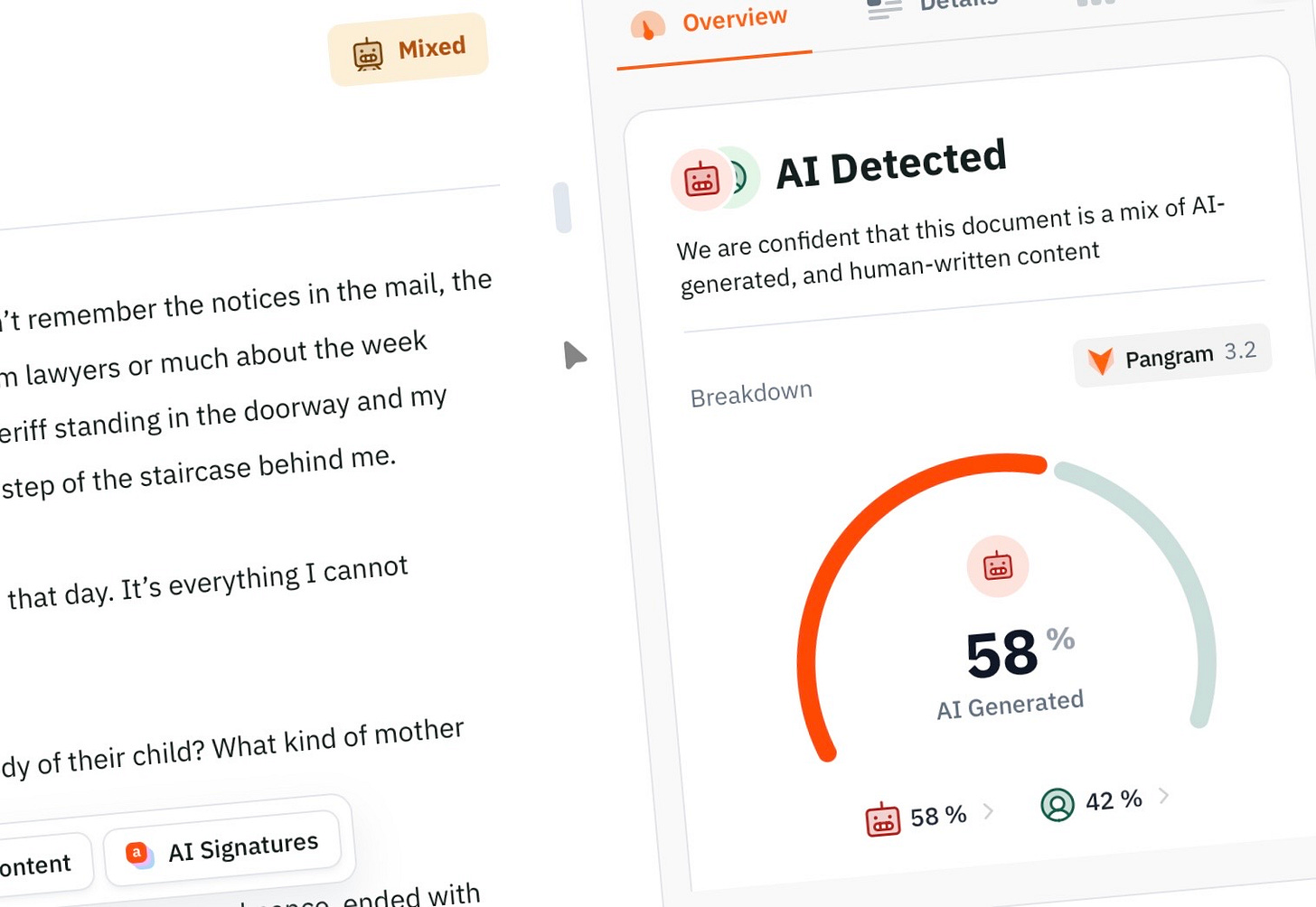

I thought so too. I ran the full piece through the AI detection service Pangram—caveats below—and it came back as 58 percent AI generated, with about a third of the text tagged “high confidence.” That said, you don’t need an AI detector to wince when you read the excerpt; the surprising thing is that the Times editor didn’t wince.

It’s not like the offending material is subtle, and there’s plenty more of this in the piece. Picking up where Tuch’s excerpt leaves off, we have:

I stayed sober.

I stayed steady.

I stayed available.

I learned not to fill the silence with explanations or apologies. I stopped trying to fix things and started showing up in quiet, invisible ways. And over time, the texture of our contact began to shift.

We still had the occasional visit, the birthday phone call, the expected check-ins that had always felt slightly choreographed. But now, something else started to surface. Subtly. Slowly.

Tuch was quick to say, “I don’t want to falsely accuse writers of AI-use. But”—and who could disagree?—“this reads EXACTLY like AI slop.” I can appreciate her reluctance to throw someone under the bus. I share it. What I share less of is the concern with whether the Times writer used AI in writing her piece, or Ballard in her book.

I personally don’t have any significant problems with that, though I get the ethical issues at play. I also get why people object to AI writing; nothing against that position. What does bother me, however, is the prose itself. I feel empathy for the writer and the plight she describes. I feel annoyance at how she chose to describe it. Shouldn’t I? It’s bad.

There might be a time and place for that sort of stuttering, staccato delivery with its single-phrase paragraphs and single-word sentences, hoping—vainly—to land every thought with gravity and earnestness, not to mention the faux poetry (fauxetry?) of phrases like “texture of our contact.” Since when does texture shift?

Maybe a few writers can pull it off, but everyone else is mimicking the style and producing garbage in the process, especially as it proliferates through low-threshold publications and social media. AI lets people create content faster than they can think of what they want to say or how they want to say it, and it shows.

I asked Claude what it “thought” about the passages in question. Even AI knows the writing stinks:

What you’re identifying is a specific degeneration of paratactic prose—that staccato, anaphoric, fragment-heavy style where every sentence gets its own paragraph, parallelism substitutes for development, and whitespace is doing all the emotional heavy lifting. . . . It’s pure pattern: anaphora, short declaratives, lexical repetition, abstract nouns (“silence,” “texture,” “shift”). . . . The style has become its own content, which is more or less the definition of kitsch.

Ouch. It’s also ubiquitous now.

¶ AI is everywhere! The writer Vauhini Vara expanded on Tuch’s tweet in the Atlantic, exploring how newspapers and magazines are increasingly publishing AI-generated or -inflected work. Vara points to a preprint edition of a paper looking at the prevalence of AI in the news.

The researchers, as they say in their abstract, analyzed 186,000 digital articles from 1,500 U.S. newspapers published last summer. Their detector was, incidentally, the same service I used above: “Using Pangram, a state-of-the-art AI detector, we discover that approximately 9% of newly-published articles are either partially or fully AI-generated.”

They also audited opinion pieces from the New York Times, Washington Post, and Wall Street Journal. They found the op-ed pages are “6.4 times more likely to contain AI-generated content than news articles from the same publications, with many AI-flagged op-eds authored by prominent public figures.”

That AI use is more common among public figures makes sense. Those same people often have teams that handle all or most of their writing: speeches, articles, blog posts, social media posts, whatever. Under pressure to produce, those teams might turn to AI now that it’s available. It’s like having an extra staff writer who doesn’t complain about the deadline or the workload.

Of course, AI can’t care about the product either. As with Ballard, was no one checking the work, or was there no editorial sense to begin with?

¶ Or is it? At the same time we’re primed to look for AI wherever we turn, we have access to AI-detection tools that render this process as simple as cutting, pasting, and hitting a button. A report awaits in seconds. I used Pangram with the Times piece; the study cited above did too. There are many others. But are they accurate?

Studies, like this one, raise questions. After the Ballard story, social media erupted with people stumping the detectors. Reports flagged such work as the opening bits of Genesis, St. John’s Gospel, and chapter 5 of Mary Shelley’s Frankenstein as AI generated. Could you even get a ChatGPT account in the first century A.D.? Must have been a very early model.

I’ve seen stats on AI in books, and the estimates are smaller than many might fear. That checks out because, even with AI, producing a book that anyone would want to read requires an immense amount of patience and (at least a little) human intelligence. We should be wary of witch hunting, as Lewis said upon outing St. John. At minimum, I think it gets us focused on the wrong thing.

¶ Training data. The reason for these false flags? AI was, of course, trained on prose like St. John’s and Mary Shelley’s. Not to mention countless other writers who use rhetorical devices in their prose that AI slurped up and now regurgitates. AI doesn’t have a sense of style or the writerly chops to know how and when to use the various techniques available to it. This can be overridden with effective prompting on the front end and editing on the back, but AI’s native voice reads like someone still learning to write.

Humans whose sense of style is impaired by . . . simply not having any make the same mistakes. This is Liza Libes’s gripe:

Of course no one at Hachette caught that it was AI-written—because AI writing is virtually indistinguishable from all the other MFA slop they like to push out.

For those of you who are outraged that Hachette published an AI-written novel over the many wonderful human submissions they receive, I have one message for you: this is what happens when you strip writing of its soul and put minimalist gimmicks on a pedestal, insisting on cutting long sentences and eliminating all digression from literature.

Whether you’re human or machine, if you don’t know how a screwdriver works, you might use the butt end to pound nails. This is a larger reason why, for me, style trumps provenance as the primary consideration.

As a long-time editor—going on three decades now—I’ve waded through gobs of slop created by genuine humans with fingernails, DNA, and everything. Can you blame the machines? They mostly learned it from us. Editors at Hachette and the Times are so used to reading it they evidently can’t tell if it’s human or AI.

¶ Hollis to the rescue! So far, I’ve encountered no one who gets the semantic and stylistic issues of AI prose more than Hollis Robbins, nor anyone so helpful in explaining the problems. Since last summer she’s published several essential pieces that explain why AI doesn’t write like humans do, including the stab-me-in-the-face “it’s not X, it’s Y” construction.2

Most recently, Hollis has covered a personal pet peeve: that LLMs—not to mention everybody on social media and New York Times editors, apparently—don’t get what paragraphs are for. If you’ve ever seen Murder by Death, you might remember Truman Capote’s Lionel Twain yelling from the head of a moose at Peter Sellers’s Sidney Wang about his broken English: “Say your goddamn pronouns!”

That’s how I feel about sentence-fragments passing for paragraphs. Do it more than a few times, and I’m ready to yell: Paragraphs are free! Available to everyone! Use them!

Humans have ways of formulating texts that AIs don’t. We’re semantic, they’re statistical. When we rely on AI to generate words, we’re leaving out what we, as humans, can uniquely do.

Personally, I don’t care that much when it comes to marketing copy or business reports, especially if a human has bothered to ensure it’s readable and makes sense to other humans. I’ve read plenty of AI-generated text that was perfectly serviceable for its intended use. And I know people who put a tremendous amount of effort into ensuring their AI output reads well. Some of it does.

But then there are those who want to write but can’t, or don’t have time, or are behind on their latest Apple TV series. They see what AI can do and think they’ve found the holy grail. And I suppose it might be if they don’t care about the quality of whatever dribbles out. They are, after all, usually the least equipped to judge whether the product sounds like something humans want to read. It likely doesn’t. And, as Jeff Goins points out, readers can tell.

Not just because of the standard tells, like em dashes (out of my cold, dead fingers!), x/y constructions, and beating the rule-of-threes to death—by the way, I just came dangerously close to committing all three offenses. It’s more than that. AI writing doesn’t sound human because it doesn’t use language the way humans do.

Go back to the complaint about Ballard’s writing, full of “generic and confusing metaphors and repetitive phrasing.” As Jeff says, “When I’m reading a piece of AI writing, I realize about halfway through: ‘Wait. Half of these sentences don’t need to exist.’” AI doesn’t know that. For all sorts of reasons, humans do. Or should.

¶ The human exclusive. I have more ideas for novels than a reasonable person should have. I’ve played with AI in developing concepts. If there’s one thing I’ve learned in that process, it’s that AI can’t do it very well, or I don’t know how to get decent outputs. But I don’t think it’s the latter.

Fundamentally, I don’t think AI can write literature; it doesn’t know what literature is because it lacks the necessary training data: suffering, joy, frustration, boredom, angst, despair, exultation, and all the other stuff Dostoevsky throws around like he’s lived a hundred lives. And humans can read what writers like Dostoevsky produce and sense nuances of form and effect that AI can’t grasp.

If I ever write a novel, it’ll be because I finally figured out how to do it on my own. But the next question is, why would I want AI to write for me in the first place?

¶ Writing as secondary. People have messages to share, information, ideas. And they don’t always have the time or knack to get them down on paper. Remember those “prominent public figures”? They’re only a boisterous eddy in that vast, teeming sea of humanity. LLMs can help. All these folks are using them already, and some do it really well. But in such cases, the writing itself is somewhat secondary to the purposes they have in mind.

I use AI in my work every day. I’m the chief content officer for Full Focus. I’ve used LLMs (usually Claude) to automate various parts of workflows. It helps me run NPS reports. I built an entire business plan for a product using ChatGPT’s deep research feature. It took me about a week; had I done it unaided, it would have taken me four times as long. I can give it access to spreadsheets and databases, and it’s far better at processing all of that than I am unassisted. With the right nudges, I find it’s also serviceable for synthesizing, copyediting, and proofing documents. Prompts matter, so mileage may vary.

But! And this is a mammoth but: I’m also a fan of the humanities. I love literature. I love writing. I read about 80 or 90 books every year. I read and write every day, have since I was a teenager. I don’t want AI doing my thinking and writing in that domain. It’s not secondary for me. I’ll use it—and do—to research, to challenge my understanding, to test ideas, to catch my typos when I remember to check.3 I want AI to handle all the drudgery of my work, especially since it does some of it better than I can, but I don’t want it to do something that gives me immense joy and satisfaction.

¶ The risk of deskilling—and something worse. Speaking of Full Focus, we have a couple of excellent podcasts. My wife and father-in-law host one, the Double Win Show. They recently interviewed Nicholas Carr, and this question came up. A couple of his comments are worth sharing here.

On the dangers of offloading writing, after discussing some AI upsides:

There’s also a bad way to use it, which is simply to offload everything onto the machine. So, oh, I don’t need to read anymore. I can get AI to gimme a summary. I don’t need to write anymore. I can get AI to crank something out. And then the danger is . . . you stop practicing things that are actually important, communicating, forming your own thoughts, reading, making sense of what you read.

On drawing a personal line:

I’m a writer and one of the first things AI has been adopted for is doing your writing for you. And it’s quite good, you know, for a lot of students, I think they can pump out a paper with AI that’s better than they could do, frankly. And so it’s very, very enticing. But what I realized is, you know, this work, the work of writing is very important to me. And so I’m gonna draw a line there. . . . I’m not gonna ask AI to write stuff for me. Even if I get stuck as I do quite often and have to really work on the words and try to get them right, I’m not gonna bypass that struggle because I think ultimately, even though it takes more time, and it can be frustrating, when I get to the end of it, I produce something better, something that’s more my own and something that’s more satisfying to me.4

Deskilling represents a real problem, especially for those who haven’t quite built the skills in the first place (back to those students Carr mentioned). But the other worry concerns me more: losing the satisfaction of having written something worthy of personal pride—not to mention the sheer joy of doing it. Writing is one of my favorite things to do, not not do.

¶ Authors can do what they want, readers can too. For those who want to have a book with their name on it more than they want to write a book, AI is a perfectly capable tool, provided they’re up to the task of making it read like something humans would enjoy passing their eyes over. If they don’t care—or even know what it would mean to care—they can publish whatever they want as is.

Traditional publishers, who have business interests to protect, won’t want it. And such authors shouldn’t be surprised if readers don’t either.


1. Publishers are antsy about AI in their books for reasons beyond ethical considerations, starting with the problem of copyright. AI-generated text isn’t copyrightable, and once you throw that into jeopardy, there goes the entire business model (such as it is). The Times ran a helpful follow-up to the original Ballard story that gets into some of the industry questions.

2. Here’s an example of what Hollis is talking about: LLMs are lousy rhetoricians. I recently read a report written by AI intended to position an uncomfortable reality to a person. The LLM was used to synthesize dozens of reports and datasets into something digestible and persuasive.

It did an okay job—in certain regards, amazing; we’re talking about a very complex analysis—but I had to edit heavily because the document was working against its own purposes. And it goes back in part to Hollis’s complaint about defaulting to the “It’s not X, it’s Y” framing.

In saying it’s not one thing, it’s another, it kept suggesting negatives that worked against the positive case. If this were a persuasive speech given in a high-school debate class, you’d ding the presenter’s grade over these mistakes. They were rookie. But the LLM didn’t know any better because it doesn’t have emotion or judgement, and persuasion depends on both.

3. Perplexity was, for instance, invaluable for the research and number crunching of my recent piece on nonfiction sales. Steven Johnson’s work with NotebookLM, which he’s helped develop, is informative on this point. And I talk about the role of AI as a research assistant in my history of the book, The Idea Machine.

4. There’s also the concern that AI distorts written communication. As a side note, those Carr quotes are from an AI transcription. It’s obviously helpful for some things.
